4 Designing for Values

Key Themes & Ideas

- Technology is always value-laden, resulting from value judgments and affecting values in the world

- Value Sensitive Design is an approach to design that aims to explicitly consider values early and throughout the design process

- In the context of technological design, anyone affected by a technology is a stakeholder

- A Stakeholder Analysis is a Value Sensitive Design technique used to identify stakeholders, values, and arguments related to a technology

- A Value Hierarchy is a Value Sensitive Design technique that can help us incorporate values into our designs, by moving from abstract values to concrete design requirements

- Design Principles are general requirements for a design project that must be met, but can typically be met in more than one way

- Design Requirements are specific requirements for a design project that typically indicate exactly how to design some feature of the project

Previously we saw that technology mediates our lives by influencing our perceptions of the world and our actions in the world. More broadly, because of these mediating effects, technology influences society in myriad ways. Once we understand this, it becomes clear that technological artifacts are not mere instruments for human will; they shape human will as well.

But there is further reason to reject the idea that technologies are mere instruments. Or, to put the issue in slightly different terms: technologies are not value neutral. The instrumentalist view of technology holds that technologies have no values or orientation toward values “built” into them; technologies do not affect values all on their own. Instead, on the instrumentalist view, technologies only affect values once people start using them for specific purposes, based on whatever value considerations those users have. Although it now serves a variety of social and political purposes in the United States, the phrase “guns don’t kill people, people kill people” largely reflects the view that technologies are value neutral. Part of the claim being made when someone uses that phrase is that guns, on their own, do not alter personal or social values and that guns don’t encourage or discourage certain types of perceptions, beliefs, and actions.

In contrast to this instrumentalist idea of value neutrality is the claim that technology is always value-laden: soaked through with value considerations. There are always values built into technologies, and technologies always exert some influence over human thought and action and thus influence what people take to be valuable. We have already seen the outcomes of this in our discussion of technological mediation. But now we can back up a bit and think about how the technological design process itself ensures all technology is value-laden.

All technologies are the products of human minds and actions. They are the result of numerous decisions, big and small, by the people crafting them. And all decisions are made on the basis of values, outlooks, perspectives, intentions, and desires. In this way, it is impossible to truly and fully divorce a technology from the humans that designed it and its social context. The design process necessarily involves establishing a (loose and often implicit) hierarchy of value considerations. Take a simple consumer electronic device: are we going to prioritize accessibility and therefore sacrifice quality in order to reduce cost? Or are we going “high-end”, knowing we will be designing a product that is out of reach for many people? Whatever answer we give, we are now identifying which values we care about more than other values. And whatever design decisions we make after that will be influenced by those value preferences.

So, building technology is all about design choices, these choices are based in (often implicit) value preferences, and these choices impact values in the world. We rarely make technology just for the sake of it; we make technology because we expect to deploy it out into the world, where it will interact with all different kinds of people and be used in many different contexts. Therefore, technology will be better when it is designed in a way that is thoughtful about the values it embodies and is informed by the social, institutional, and cultural contexts in which it is to be used. Technologies that are designed in a value sensitive way are more intuitive, more accessible, more seamless, and more delightful than those that are not. They are also more likely to do good: promote human flourishing, generate social benefits, and contribute to environmental sustainability. They are more likely to work effectively, and they are more likely to be successful.

In short, by engaging in Value Sensitive Design technology professionals can better meet their professional responsibilities, design better technologies, and contribute to positive social change.

Examples of Values in Technology

There is a constant stream of news stories about controversial, ethically problematic technologies. Below are a few brief examples. These cases illustrate the importance of designing technologies in a value-informed way that includes careful consideration of the social and institutional contexts of deployment and use:

- Highways have the potential to help people travel faster and increase access to certain areas. However, their construction often alters the social landscape by dividing neighborhoods, increasing noise pollution, and altering traffic patterns in ways that can both increase and decrease business in nearby areas.

- Facial recognition systems have the potential to make our lives more convenient through automatic face tagging on social media, facial unlock on smartphones, and so on, but they also have profound implications for individual privacy.

- Smart speakers are extremely popular, but users were upset to learn that transcripts of their audio were secretly being reviewed by human beings. The key issues were (1) that users were not informed about this practice, and (2) that most people consider audio recordings from within their homes to be sensitive data.

- Sharing economy apps that let anyone rent out their car or home are very convenient for those with resources to offer and those looking to rent, but they are also having negative impacts on cities in the form of increased roadway congestion and displacement of local residents.

- You pay for ‘free’ online services by divulging your personal data, which is collected by hundreds of advertising companies. People are often distressed at the extent to which this data can be used to hyper-target advertising, as best evidenced by the Cambridge Analytica scandal.

- The impending rollout of self-driving cars raises challenging questions about how these cars should be designed to ensure human safety, and how these vehicles, their makers, and their operators should be held accountable when accidents occur. Even more challenging ethical questions arise when we consider the development of autonomous military drones.

- Many organizations are adopting machine learning systems in an effort to reduce costs and remove human bias from processes. These systems evaluate whether people are eligible for loans, insurance, employment, social services, and even parole. However, machine learning systems are not neutral, and these systems have been found to exhibit human biases like racism and sexism.

- User interfaces are powerful mechanisms for shaping how users interact with systems. However, some designers adopt intentionally deceptive user interfaces called “dark patterns”.

1. What is Value Sensitive Design?

We know that technologies are value-laden and reflect value judgments. This is true whether designers explicitly consider values or not. But once we know that technologies will be influenced by values and will influence values, it would be irresponsible not to explicitly consider values in the design process. Just as we ought to deliberately account for technology’s mediating effects once we know about them, we ought to deliberately consider values in our design thinking once we know that technology is value-laden. And that is precisely what Value Sensitive Design is all about.[1]

Value Sensitive Design (or VSD) is an approach to identifying and grappling with value-laden design decisions. Originally developed by the computer science professor Batya Friedman, VSD has been widely used across technology disciplines, from civil engineering to surveillance to human-robot interactions.

The central goal of VSD is to help technology professionals make socially informed and thoughtful value-based choices in the technology design process. At a high level, VSD helps us to:

- Appreciate that technology design is a value-laden practice

- Recognize the value-relevant choice points in the design process

- Identify and analyze the values at issue in particular design choices

- Reflect on those values and how they can or should inform technology design

VSD is, in effect, an outlook for seeing the values in technology design and a process for making value-based choices within design. It encourages us to account for a variety of often conflicting values and concerns. Additionally, it accepts that there are rarely easy answers to moral questions and so does not provide an algorithmic approach to resolution. Instead, it provides a variety of tools and questions that form the process and which, when done conscientiously and through engagement with others, can often lead to improved technological design.

There are far too many tools and techniques under the banner of Value Sensitive Design to cover them all. So, instead, we will focus on two that can be useful in nearly every design context and that have the added benefit of being useful tools for learning about ethics in general.

2. The Stakeholder Analysis

According to the Association for Computing Machinery’s Code of Ethics and Professional Conduct, “all people are stakeholders in computing.”[2] We can reasonably extend this idea to say that all people are stakeholders in technology. By this we mean that everyone is affected, to some degree, by technologies. This is obvious for those who engage with the technologies, but it is also true for those who may never engage with them. Consider, for instance, the massive piles of e-waste in Western Africa. Or, similarly, consider that air pollution cannot be contained to the areas in which it was produced and so anyone could be negatively affected by it, no matter where they live.

The idea of a stakeholder is familiar from many business contexts, where it typically refers to those entities that have a financial stake in the company or project. But in Value Sensitive Design, picking up on the technology professional’s obligation to the welfare of the public, a stakeholder is anyone who may be affected, directly or indirectly, by the technology or technological project under consideration. It is also common to include non-human animals and environmental systems as potential stakeholders. Whether we expand stakeholders in this way or not, however, the key is that for our purposes stakeholders always fit broadly in the category of “the public”. We do not, in contrast to the business context, care about the company designing the technology or investors in it. Our aim is to design the best product or project for the public, and so our stakeholders are limited to the public.

One of the core tools of VSD is the Stakeholder Analysis. This sort of analysis is typically done very early in the design process – perhaps before any design decisions have even been made – but is continually re-examined and updated as the process continues. The core function of a stakeholder analysis is to provide a thorough understanding of who may be affected (positively or negatively) by the technology as well as the values that are affected, or perceived to be affected, by the technology. Put another way, a stakeholder analysis is a tool for designers to “get out of their own head” in order to more comprehensively understand “what is at stake” with a technology. Any given designer may already be able to identify some relevant values for the project, but they may also miss some, understand some differently from members of the public, or (rightly) believe some values aren’t relevant that some members of the public nonetheless do believe are relevant. And it matters that they believe a technology may affect a value, even if it won’t. For instance, if people wrongly believe that some new technology will negatively impact their privacy, they may not adopt it. Knowing some stakeholders see it this way can help designers innovate in ways that eliminate the (false) view and therefore increase the success of the technology.

Another way of understanding the aim of the stakeholder analysis is to see it as a tool for mapping the “argumentative terrain” of a technology. We are trying to take account of all the possible arguments for and against designing the technology and designing it in particular ways. Sometimes we do this by noting a particular group that may be negatively affected by the technology. That the group would be negatively affected may count against designing the technology in a particular way or may count in favor of designing it in some other way. Other times we may do this by identifying a key value that will be impacted by the design. Once we know that value, we can start to ask how we can design the technology to promote or protect it or to limit negative effects on it.

Importantly, exactly how we divide up “stakeholders” will depend on the context. In all cases, our goal isn’t to identify specific individuals (Nancy, Nikea, Bill, etc.) but rather to identify the various ways people may relate to the technology. For instance, if we are conducting a stakeholder analysis for a consumer electronic product we may distinguish between “power users” and “casual users”. People falling into these two groups will have different preferences about the device; power users would benefit from greater customization features while casual users would prefer ease of use even at the expense of capabilities. We might also distinguish between “early adopters” and “late adopters” of the product, if we can identify differences between their preferences or the values they associate with the technology. Notice, too, that the very same individual could be both a “casual user” and an “early adopter”. This is a major reason we focus on these categories (or roles, as they are often called) rather than named individuals; most people will fall into multiple stakeholder categories.

The four stakeholder categories mentioned above all fall under the umbrella of direct stakeholders. These are stakeholder groups that will typically be using or interacting with the technology. For many (but not all) technologies “direct stakeholder” effectively means “user”. But most technologies also affect people who will never themselves interact with the technology (or will only do so minimally or incidentally). Many large-scale public works projects are like this. Very few people are direct stakeholders for a power plant. But a significant number of people are affected (or potentially affected) by the power plant. We could, for instance, identify “nearby residents” as a stakeholder category relevant to the design of the power plant. The power plant would likely affect the health of these people, which is an important value. These sorts of stakeholder categories are typically called indirect stakeholders.

It is important to keep in mind that the distinction between direct and indirect stakeholders is not about the degree to which they are affected by a technology. The impact of a technology on indirect stakeholders can be just as great as, if not greater than, its impact on direct stakeholders. The distinction is about the pathway of impact: does it come from interaction with the technology, or does it come from others’ use of the technology or, more generally, the mere existence of the technology?

Our central goal in conducting a stakeholder analysis is to produce a reasonably comprehensive picture of all the various ways people may be affected by the technology and how they will be affected. But, of course, there may be an infinite number of potential stakeholder roles relevant to a technology, and even if we could identify them all our list would be so long as to be useless. Thus, there are a few general considerations to keep in mind when conducting a stakeholder analysis that can help increase the likelihood of capturing the important stakeholders and values while not getting bogged down.

First, our goal in identifying a stakeholder group is to recognize the unique way(s) they may be affected by the technology. We are not identifying groups just for the sake of it. So, for instance, if we are unable to find any meaningful difference between the values and preferences of “early adopters” and “late adopters” of the technology then we do not need to identify those stakeholder groups at all. Keeping this in mind can help reduce the size of our list.

Second, if we keep in mind that a stakeholder analysis is a means of laying out the “debate landscape” for the technology (identifying the various arguments for and against particular ways of designing it), then that can further reduce the inevitable length of our list. We do care about the stakeholders in their own right, but we are also identifying them as a means of identifying relevant values. We distinguish “power users” from “casual users” in large part because those groups care about different values (or, more precisely, prioritize different values) when it comes to our technology. And so, although the typical stakeholder analysis process moves from stakeholder to value and argument, we can also work backwards. We might, first, identify a value or an argument relevant to our design context and then try to identify a stakeholder group that the value or argument fits with.
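The bookkeeping behind these two considerations can be sketched in a few lines of Python. This is only an illustration, not part of any standard VSD toolkit: the `StakeholderRole` class, its fields, and the sample roles and value labels are all assumptions invented for the example. The sketch records each role with its pathway of impact and the values it prioritizes, flags roles whose value profiles are identical (candidates for dropping or merging), and builds a value-to-role index for working backwards from a value to the groups that care about it.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Pathway(Enum):
    DIRECT = "direct"      # affected through interacting with the technology
    INDIRECT = "indirect"  # affected by others' use, or by its mere existence


@dataclass(frozen=True)
class StakeholderRole:
    name: str
    pathway: Pathway
    values: frozenset  # values this role prioritizes (hypothetical labels)


def redundant_groups(roles):
    """Group roles that share a pathway and an identical set of values."""
    groups = defaultdict(list)
    for role in roles:
        groups[(role.pathway, role.values)].append(role.name)
    return [names for names in groups.values() if len(names) > 1]


def roles_by_value(roles):
    """Index roles by value, for working backwards from a value to roles."""
    index = defaultdict(set)
    for role in roles:
        for value in role.values:
            index[value].add(role.name)
    return dict(index)


# Hypothetical analysis for a consumer electronic device.
roles = [
    StakeholderRole("power users", Pathway.DIRECT, frozenset({"customization"})),
    StakeholderRole("casual users", Pathway.DIRECT, frozenset({"ease of use"})),
    StakeholderRole("early adopters", Pathway.DIRECT, frozenset({"reliability"})),
    StakeholderRole("late adopters", Pathway.DIRECT, frozenset({"reliability"})),
    StakeholderRole("bystanders", Pathway.INDIRECT, frozenset({"privacy"})),
]

print(redundant_groups(roles))            # roles with no distinguishing values
print(roles_by_value(roles)["privacy"])   # who cares about privacy?
```

In this made-up data, early and late adopters prioritize the same value, so the first call flags them as a distinction that may not be worth keeping. Whether two groups really share a value profile is, of course, a judgment the analysis itself has to supply.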

Finally, it is worth noticing the various means by which we may go about identifying stakeholders, values, and arguments. In some cases, we may bring together a “focus group” of individuals who represent various stakeholder groups. Or, similarly, we may talk to various individuals to just get their view. But we may also consult studies and argumentative essays (or videos). Existing attitude surveys can be a useful tool for identifying general perspectives on a technology and argumentative essays can be vital for fully filling out how values or stakeholder groups will be affected and what that means for the design of the technology.

In sum, a stakeholder analysis is a vital tool for taking seriously the responsibility to the welfare of the public. It prompts us to think broadly about the effects of a technology, especially when we consider indirect stakeholders. And it also helps us identify values that may be unintentionally affected by the technology. In these ways, and more, it functions as a tool of responsible technological design and a resource for innovation.

3. The Value Hierarchy

A stakeholder analysis helps us identify relevant values. And, beyond that, we will hopefully already have an idea of what values we want the technology to promote or protect. But VSD is not just about initial value identification; it is also about taking values seriously in the design process. One valuable technique for doing that is the Value Hierarchy.

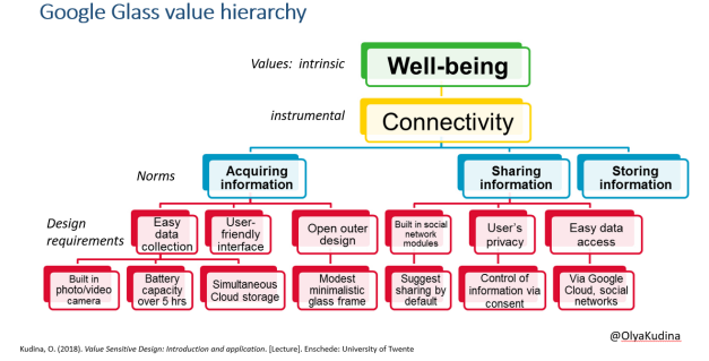

A Value Hierarchy is a technique we can use to systematically implement a value into our designs. It is a means of operationalizing values so that they become the sort of thing that a product or project can implement. It typically results in a visual hierarchy, like the one shown here for Google Glass.

The example hierarchy for Google Glass illustrates the 4 main components of a value hierarchy:

- Intrinsic/Overarching Value: Represented by “well-being” in the example, this top of our hierarchy is where we identify the core value we are trying to design for. The idea of an “intrinsic” value is that it is something we care about for its own sake, as opposed to something we care about as a means to an intrinsic value.

- Instrumental Value(s): Represented by “Connectivity” in the example, this section of our hierarchy identifies one or more values that we care about because they can (in the context) help us protect or promote our intrinsic value. Strictly speaking, this level of the hierarchy is not always necessary, but is often helpful.

- Design Principles: Called “norms” in the example and represented by the items in the blue boxes, design principles broadly highlight the sorts of things our technology should be able to do or not do without specifying precisely how they will be designed to do or not do those things.

- Design Requirements: The most “concrete” level of the hierarchy, represented by the many red boxes in the example, design requirements are the (relatively) precise design features of our technology. They provide the sort of information that we can directly use in designing our product or project.
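One way to make the four levels concrete is to write the hierarchy down as a small tree. The Python sketch below is an illustration only: the `Node` class and the `outline` and `is_well_ordered` helpers are hypothetical names, and the entries are limited to the Google Glass items this chapter mentions in its text (well-being, connectivity, three information-related principles, and cloud storage). It is not a transcription of the full figure.

```python
from dataclasses import dataclass, field

# Levels ordered from most abstract to most concrete.
LEVELS = [
    "intrinsic value",
    "instrumental value",
    "design principle",
    "design requirement",
]


@dataclass
class Node:
    level: str
    text: str
    children: list = field(default_factory=list)


def outline(node, depth=0):
    """Return the hierarchy as indented lines, most abstract level first."""
    lines = ["  " * depth + f"[{node.level}] {node.text}"]
    for child in node.children:
        lines.extend(outline(child, depth + 1))
    return lines


def is_well_ordered(node):
    """Check that every child is more concrete than its parent.

    Levels may be skipped, since the instrumental level is optional.
    """
    position = LEVELS.index(node.level)
    return all(
        LEVELS.index(child.level) > position and is_well_ordered(child)
        for child in node.children
    )


hierarchy = Node("intrinsic value", "well-being", [
    Node("instrumental value", "connectivity", [
        Node("design principle", "should be able to acquire information"),
        Node("design principle", "should be able to share information"),
        Node("design principle", "should be able to store information", [
            Node("design requirement",
                 "cloud storage service built into the device"),
        ]),
    ]),
])

print("\n".join(outline(hierarchy)))
```

Writing the hierarchy this way makes gaps visible: in this partial reconstruction, two of the three principles have no design requirements beneath them yet.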

This tells us, in brief, what a value hierarchy contains, but we will go into more detail for each of these components below. Before that, however, it is worth noting that there is one sense in which all technological designers should already be familiar with value hierarchies. As noted in an earlier chapter, it is often suggested that engineers “have a special concern for safety”. Thus, engineers are always expected to build a value hierarchy with safety as the value. Of course, this is just typically done without explicitly constructing the value hierarchy. Nonetheless, we could place safety as a value – likely an instrumental value with something like well-being or health as the intrinsic value. In standard engineering thinking, safety is often further specified into context-specific design principles. For instance, when constructing something like a bridge we must consider the “factor of safety”, which is effectively a design principle. It says something like “the bridge should be able to hold five times its expected actual load”. Of course, as any engineer familiar with bridge construction could tell you, there are several different ways to construct a bridge to meet that safety factor. Thus, that the bridge must be designed to a 5x safety factor does not immediately give us any concrete design requirements. But it does tell us that we will need to identify design requirements that will implement that design principle.
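The arithmetic behind this principle is simple enough to sketch. In the Python below, the 5x factor comes from the bridge example above, while the function names, the expected load, and the three candidate designs with their capacities are invented purely for illustration. Real capacities would come from structural analysis and the applicable codes.

```python
SAFETY_FACTOR = 5.0  # from the bridge example: five times the expected load


def required_capacity(expected_load, factor=SAFETY_FACTOR):
    """Minimum load the structure must be able to hold."""
    return expected_load * factor


def meets_principle(design_capacity, expected_load, factor=SAFETY_FACTOR):
    """True if a candidate design satisfies the safety-factor principle."""
    return design_capacity >= required_capacity(expected_load, factor)


expected_load_tonnes = 200  # hypothetical expected actual load

# Hypothetical candidate designs and their load capacities in tonnes.
candidates = {
    "steel truss": 1200,
    "concrete arch": 1000,
    "timber beam": 600,
}

for name, capacity in candidates.items():
    verdict = "meets" if meets_principle(capacity, expected_load_tonnes) else "fails"
    print(f"{name}: {verdict} the 5x principle")
```

With these made-up numbers, two quite different designs pass the check. That is the sense in which the safety factor works as a design principle: it constrains the design without determining it.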

And so, in a certain sense, the value hierarchy is no different from standard technical design thinking. What is different, however, is that whereas “designing for safety” is the foundational non-negotiable of all engineering, a value hierarchy helps us focus on how to design for other values that are not foundational in the same way and are typically (at least when it comes to the instrumental values) specific to our design context.

To more fully understand how to conduct a value hierarchy, as well as its value, we can turn to examine the components of the hierarchy in more detail.

3.1. Values

The first chapter of this textbook introduces you to the concept of a value. So, we will not cover that again. However, given the centrality of values to a value hierarchy and the fact that values are divided into two different levels in the value hierarchy, it is worth investigating their role in this technique in a bit more detail.

There are some things that we care about for their own sake and other things we care about because they are a means to something else. Well-being is the quintessential intrinsic value, something we care about for its own sake, while something like wealth is a prime example of an instrumental value. Having money is not valuable in its own right (although some people certainly act as if it is!). Rather, if being wealthy is valuable at all, it is valuable because it can help us protect or promote other values, like well-being. There are, as well, some values which are both intrinsic and instrumental. Health and education are prime examples. It is good in itself to be healthier and more educated, but being healthier and more educated is also a means to many other good things in life.

There is no easy way to distinguish between instrumental and intrinsic values. People will disagree over some cases. But, in general, when it comes to instrumental values we can ask the question “and why does that matter?” and there should be a good answer (although we may not be able to immediately give it). When it comes to intrinsic values, however, there is no real answer to that question. In response to the question “why does well-being matter?” the appropriate answer just seems to be “because it does” and anyone who says otherwise is either being obstinate or living in a very different world from us.

The good news is that, for our purposes, we do not need to worry too much about the distinction between intrinsic and instrumental values. Not all value hierarchies contain both. Instead, for our purposes, we can often simply focus on what would otherwise be instrumental values. In the Google Glass case, we are interested in designing for “connectivity”. That is certainly not an intrinsic value; if it matters at all, it matters because it can promote well-being (as well as perhaps other intrinsic values). But, in the context of the value hierarchy, connectivity is our focus: we derive our design principles from thinking about connectivity, not well-being (at least not directly).

Thus, just as standard technical design involves thinking about the (instrumental) value of safety and identifying design principles related to it, value sensitive design involves thinking about instrumental values and identifying the design principles related to them. We can, however, keep the idea of intrinsic values in the back of our mind to ensure that what we are identifying as an instrumental value is valuable at all.

3.2. Design Principles

Design principles are perhaps the most difficult component of a value hierarchy. They need to be simultaneously more precise and context-specific than the value(s) we are designing for and less precise and context-specific than design requirements. They are an intermediate category that, broadly, captures a goal we are trying to achieve. Using our designing for safety example, our safety factor design principle can be restated as the goal of achieving a design that can hold five times the expected actual load. Unfortunately, the examples in the Google Glass hierarchy are not the most illustrative, but they can be restated in a better way. To promote the value of connectivity, we should design our product to be able to acquire information, to be able to share information, and to be able to store information.

Another example of a value hierarchy focuses on the design of aviaries for chickens. It identifies “animal welfare” as the value (without worrying about whether it is intrinsic or instrumental) and then provides design principles like “hens should have sufficient living space”, “hens should be able to lay their eggs in laying nests”, and “hens should have the freedom to ‘scratch’ and to take ‘dustbaths’”.[3] These sorts of design principles are better examples of what design principles can be, laying out general requirements for the design of the aviary without demanding precisely how the aviary be built to meet those general requirements. For instance, while “having sufficient living space” does specify a minimum space requirement it does not specify a maximum. Thus, aviaries could be designed in various sizes and shapes while meeting the principle.

The example of “sufficient living space” highlights another common feature of design principles that distinguishes them from design requirements. In the context of the aviaries, the minimum requirement for “sufficient” was specified by the relevant EU law that imposed these design principles. But it did need to be specified, and it could have been specified differently. Design principles often (but not always) include terms or are otherwise phrased in such a way that they need interpretation and specification. This is what makes them principles rather than (for instance) rules or requirements. Importantly, of course, while design principles (or parts of them) may be open to some interpretation and specification, that doesn’t mean anything goes. Instead, they typically prompt us to conduct appropriate research in the appropriate field. For the aviary, this meant digging into research on animal welfare and cage sizes for chickens. Similarly, we could have a technical design principle that simply says “must meet or exceed the safety factor” without specifying what the safety factor is. In doing that, we are simply prompting the relevant designers to look at the appropriate laws, building codes, and/or engineering standards to make the determination.

As should be clear by now, specifying values into design principles is a complex process. It requires technical knowledge, social knowledge, and conceptual knowledge. But there are a few things to keep in mind that can help with the process:

- We should always have a relatively precise understanding of the value we are working to specify before we start to craft design principles. Thus, we should not move from “connectivity” to design principles without first clarifying what we mean by “connectivity”.

- Design principles are prescriptive: they tell us what we should do (or not do) in order to protect or promote the relevant value(s). As such, it can often be helpful to start all principles with something like “the product/project should…” or “the product/project should not…”. Principles must provide direction, even as they should not specify precise requirements.

- A design principle should be an appropriate response to the relevant value and it should, perhaps in concert with other design principles, constitute a sufficient response to the relevant value. A sufficient response means fully responding to the demands of the value, rather than only responding to parts of it.

3.3. Design Requirements

Design principles are much more immediately useful in the technical design process than values. Values can be complex, nuanced, and difficult to apply to design decisions. Design principles, on the other hand, give us direction to our designs. Thus, once we have our design principles, we can move into the iterative design process with those principles in mind. To do that, however, we need to begin translating our principles into design requirements.

As previously noted, design requirements represent the most “concrete” part of our value hierarchy. If our design principle is to “adhere to the safety factor” for our bridge, then the design requirements that implement that principle would be things like “support pillars every 20 feet” and “steel construction” (perhaps specified further to identify relevant properties of the steel). These design requirements are the sorts of things that directly make their way into our product or project. If the Google Glass should store information for easy retrieval (a design principle), then it can implement this principle with the use of a cloud storage service built into the device (a design requirement). Similarly, in the aviary design example, the principle of “sufficient living space” might be specified into the requirement to have at least 450 square centimeters of floor area per hen. This now provides us a precise requirement for our overall design (even as it allows us to go beyond it).

There are two important features of the move from design principles to design requirements that we should keep in mind. The first is that just as we typically need multiple design principles to sufficiently specify our value, we may also need multiple design requirements to fully satisfy a single design principle. In the aviary example, in fact, “sufficient living space” is translated into four design requirements. Simply identifying the minimum floor space is insufficient; we also have to think about space at the feeding trough, height of the living space, and slope of the living space. Similarly, to fully specify our design principle of adhering to the safety factor, we probably need to specify both the number of support pillars and the appropriate construction materials. Just doing one of those things would not fully meet the demands of the principle.

The second feature is that sometimes a single design requirement may be relevant to adhering to multiple design principles. For instance, the EU’s guidelines on chicken aviaries not only provide a design principle related to the size of the living space; they also include the principle that hens should be able to rest on perches. Design requirements specifying the size of those perches simultaneously help us implement the perch principle and the sufficient living space principle. This can obviously be valuable from the perspective of simplicity in design. However, it is typically better to first try to lay out how to meet each principle independently and then, on a later review, identify potential overlaps or synergies.
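The two features just described, several requirements per principle and one requirement serving several principles, amount to a many-to-many mapping that can be checked mechanically. The following Python sketch uses the aviary items mentioned in this chapter. The dictionary layout and function names are assumptions for illustration, and only the 450 square centimeter figure comes from the text, so the other requirements are left as unquantified descriptions.

```python
# Design principles for the aviary, as named in this chapter.
principles = [
    "sufficient living space",
    "laying nests",
    "freedom to scratch and take dustbaths",
    "perches for resting",
]

# Each design requirement lists the principle(s) it helps implement.
# Only the 450 cm^2 figure appears in the chapter; the rest are unquantified.
requirements = {
    "at least 450 cm^2 of floor area per hen": ["sufficient living space"],
    "space at the feeding trough": ["sufficient living space"],
    "height of the living space": ["sufficient living space"],
    "slope of the living space": ["sufficient living space"],
    "size of the perches": ["perches for resting", "sufficient living space"],
}


def uncovered_principles(principles, requirements):
    """Principles that no requirement implements yet."""
    covered = {p for served in requirements.values() for p in served}
    return [p for p in principles if p not in covered]


def shared_requirements(requirements):
    """Requirements that serve more than one principle."""
    return [req for req, served in requirements.items() if len(served) > 1]


print(uncovered_principles(principles, requirements))
print(shared_requirements(requirements))
```

The first list flags principles that still need to be specified into requirements (here, simply because the chapter does not list requirements for them). The second surfaces the overlaps that a later review might look for.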

4. Conclusion: Engaging in Value Sensitive Design

This chapter has introduced an approach to design known as Value Sensitive Design. The overall goal of this approach is to account for values early in the design process and throughout the design process. To do that, we examined two important VSD tools: the stakeholder analysis and the value hierarchy. There are many more tools in the VSD toolkit beyond these two, but together they provide useful techniques for taking seriously the value-laden nature of technology and technological design.

Check Your Understanding

After successfully completing this chapter, you should be able to answer all the following questions:

- What does it mean for technology to be value-laden? How does that compare to the idea of technology as value neutral?

- As a general approach to design, what is Value Sensitive Design?

- In the context of engineering and technology, what is a Stakeholder? What is the difference between Indirect and Direct Stakeholders? You should be able to provide an example of each.

- What is the purpose of a Stakeholder Analysis?

- In general, what is a Value Hierarchy and what is its purpose in technological design?

- What is the difference between an intrinsic and an instrumental value? What is an example of each?

- What are Design Principles? How do they relate to values? What is an example of a Design Principle?

- What are Design Requirements? How do they relate to Design Principles? What is an example of a Design Requirement?

References & Further Reading

Friedman, Batya & David G. Hendry (2019). Value Sensitive Design: Shaping Technology with Moral Imagination. MIT Press.

van de Poel, Ibo (2013). “Translating Values into Design Requirements,” in Philosophy and Engineering: Reflections on Practice, Principles and Process, edited by D.P. Michelfelder et al. Springer Press.

[1] Much of what is said here is derived from or inspired by Christo Wilson’s VSD@Khoury website. https://vsd.ccs.neu.edu

[2] Association for Computing Machinery (2018). “ACM Code of Ethics and Professional Conduct,” https://www.acm.org/code-of-ethics

[3] Ibo van de Poel (2013). “Translating Values into Design Requirements,” in Philosophy and Engineering: Reflections on Practice, Principles and Process, edited by D.P. Michelfelder et al.